Artificial Intelligence (AI) is fundamentally reshaping the security landscape of product data in the United States. AI-driven technologies are enhancing data protection, threat detection, and access controls, offering organizations unprecedented capabilities to safeguard sensitive product information. However, the integration of AI also introduces new security vulnerabilities, regulatory complexities, and ethical challenges.

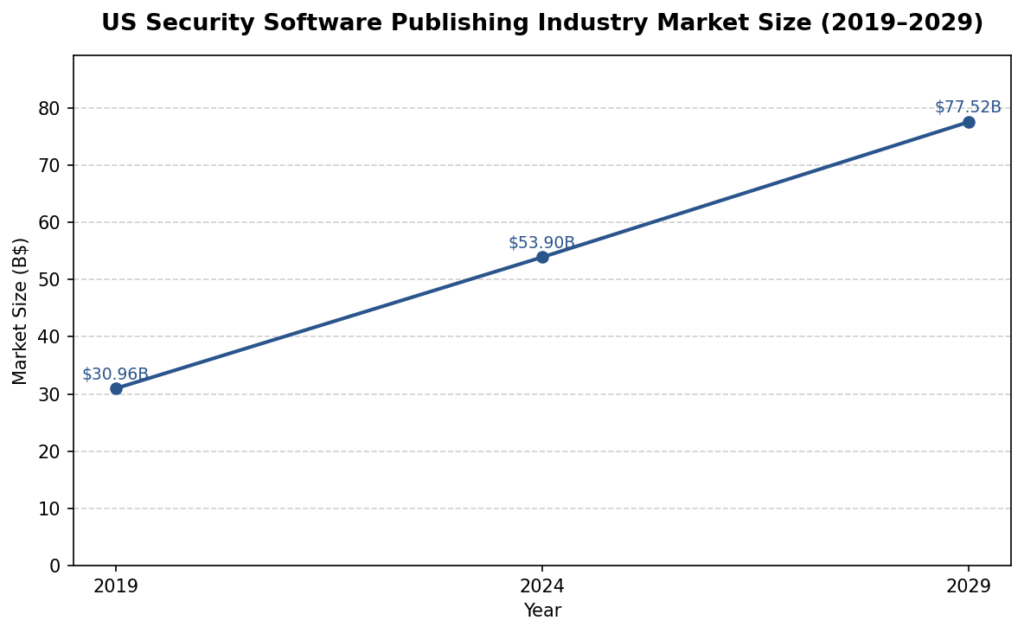

The US product data security landscape is undergoing rapid transformation due to the proliferation of AI technologies. AI is now embedded across the product data lifecycle—from development and design to distribution and customer interaction—enabling organizations to automate threat detection, streamline access controls, and enhance compliance with evolving data protection regulations. The security software publishing industry, valued at $53.9 billion in 2024 and projected to grow at a 7.5% CAGR through 2029, is at the forefront of this shift, with leading firms integrating AI and machine learning into their cybersecurity offerings to address increasingly sophisticated cyber threats and regulatory demands 1.

AI’s impact extends beyond the security software publishing sector to data processing and hosting, retail, and other industries that rely heavily on large-scale product data management. The rise of AI-powered tools in these industries reflects a new paradigm in data security, in which anomaly detection, behavioral analytics, and autonomous response systems are becoming standard practice. The sheer volume and complexity of digital product data now require scalable, intelligent security frameworks to ensure data integrity, regulatory compliance, and business continuity 2.

Moreover, the spread of AI has gone hand in hand with the adoption of advanced approaches such as Zero Trust Architecture, edge computing, and federated learning. These innovations are improving the efficacy of threat prevention and detection, but they also require more complex governance structures as legal, regulatory, and ethical boundaries continue to shift. As companies pursue operational efficiency and competitive advantage, balancing AI-driven innovation with robust security and privacy measures remains a central challenge in today’s evolving market 3.

Market Segmentation

AI-driven security solutions are being adopted across various sectors, including enterprise software, data processing and hosting, retail, and regulated industries such as finance and healthcare. Enterprise software publishers like Oracle and Salesforce are embedding AI into their platforms to provide predictive security and zero trust architectures, while data processing and hosting providers such as Amazon Web Services and Microsoft Azure are leveraging AI for real-time threat detection and edge computing 2 4. In retail, AI-powered shopping assistants and digital twins are transforming product data management and security, while regulated sectors are adopting AI to automate compliance and risk management 5 6.

In addition, smaller and midsize companies (100 to 1,000 employees) are capitalizing on AI adoption: their agility and fewer legacy constraints let them implement AI-driven access controls and security solutions more rapidly than larger incumbents. These organizations are better positioned to pilot, iterate on, and operationalize AI risk mitigation frameworks, helping to accelerate industry-wide adoption of AI-native security best practices 7.

Adoption also varies substantially across verticals. Highly regulated sectors such as banking and healthcare deploy AI security tools to automate threat detection, maintain real-time compliance with privacy mandates, and mitigate fraud quickly. The retail sector, by contrast, focuses its use of AI on customer data handling, secure transaction processing, and fraud prevention in AI-powered shopping ecosystems 8.

Companies operating within these diverse market segments require tailored AI security solutions that address sector-specific challenges, such as managing compliance in health data environments (HIPAA), transaction risk in banking (PCI-DSS, GLBA), and product authenticity and anti-fraud in retail. This increasing segmentation is pushing leading vendors to offer modular, interoperable security solutions that can be customized to industry needs while maintaining a unified approach to threat detection and data protection.

Market Segmentation by AI Security Adoption

| Sector | Primary AI Security Applications | Key Compliance Concerns | Illustrative Technologies |
| --- | --- | --- | --- |
| Enterprise software | Predictive security, zero trust, threat analytics | Data privacy, CCPA, GDPR | Salesforce AI, Oracle ZPR, AI SIEM |
| Data processing & hosting | Edge computing, anomaly detection, DDoS defense | Data locality, regional laws | AWS Shield, homomorphic encryption |
| Retail | Digital twins, AI shopping assistants, fraud detection | Payment security, CCPA | Amazon Rufus, Klarna AI, TensorFlow |
| Finance/healthcare | Automated compliance, risk monitoring, fraud prevention | HIPAA, PCI-DSS, GLBA | AI MDM, ML for fraud, XDR/XAI |

How Is the Market Structured?

Major players such as Microsoft, Oracle, CrowdStrike, and Zscaler are investing heavily in AI-driven security technologies, often through strategic acquisitions to accelerate the integration of advanced threat detection and access control capabilities 1 9 10. Midsize companies, due to their agility and manageable IT complexity, are also emerging as leaders in operationalizing AI-driven security frameworks 7. The market is further shaped by the rise of specialized AI security vendors and the growing importance of interoperability among security tools 11.

As AI-based security tools become table stakes, the market is seeing a wave of mergers and acquisitions, with established players acquiring niche startups to fill specific gaps in the AI security value chain, such as identity protection, endpoint monitoring, and compliance automation. For instance, major deals such as Palo Alto Networks’ $25 billion acquisition of CyberArk and SentinelOne’s $250 million acquisition of Prompt Security highlight the industry’s recognition that identity security and AI application protection are strategic priorities 12. Partnerships such as Oracle and AWS’s collaboration on next-generation network security technologies underscore the industry’s pivot toward cross-platform AI security solutions as clients demand robust, integrated security stacks 2.

The competitive landscape is increasingly influenced by a company’s ability to deliver explainable, scalable, and rapidly deployable AI security tools. Vendors are differentiating themselves by providing end-to-end visibility across data, models, applications, and AI infrastructure, and by incorporating features such as real-time compliance monitoring, interpretability frameworks, and seamless integration with cloud and edge environments 13. With thousands of AI security startups globally but just a handful of full-stack, interoperable security platforms, the market is poised for further consolidation as enterprises seek holistic solutions to complex AI-driven threats.

Top U.S. AI Security Vendors and Capabilities (2025)

| Company | Core Capabilities | Notable AI Security Features |
| --- | --- | --- |
| Microsoft | Endpoint/cloud defense, governance | Intelligent Security Graph, Copilot |
| Oracle | Network security, AI analytics, hybrid cloud | Zero Trust Packet Routing (ZPR) |
| CrowdStrike | Endpoint/XDR, threat intelligence | Charlotte AI, adaptive threat hunting |
| Zscaler | Cloud access, Zero Trust, managed detection | AI-based SASE, Red Canary integration |
| Startups (various) | Point AI solutions (model risk, API security) | Model monitoring, compliance bots |

Regulations: How Is the Government Playing a Part in This?

While there is no comprehensive federal AI regulation, a patchwork of state laws, such as California’s CCPA and newer AI transparency requirements, imposes stringent obligations on organizations handling sensitive data 14 15 16. Recent federal executive orders aim to streamline AI regulation and preempt state laws, but legal challenges and ongoing state-level legislative activity create compliance uncertainty 17 18. Regulatory frameworks increasingly emphasize transparency, explainability, and accountability in AI systems, with mandates for algorithmic impact assessments, data provenance, and audit trails 19 20.

State-level advancements include mandatory privacy risk assessments, AI impact statements, and new categories of “high-risk” AI applications, particularly in employment, finance, health, and critical infrastructure. California’s legislative activity continues to set de facto standards: the California AI Transparency Act mandates disclosures and watermarking for AI-generated content, while Assembly Bill 2013 requires developers to disclose information about the data used to train generative AI systems 16. New York and Colorado have enacted laws that require reporting of certain AI-related incidents within set deadlines, underscoring a trend toward rapid incident response expectations 21.

At the federal level, an executive order issued in late 2025 sought to preempt state regulations through a unified compliance framework, but legal opinions remain divided on its enforceability, and multiple states assert their right to maintain their own AI legislation to balance consumer protection and innovation 18. Industry experts anticipate that organizations will need to maintain “dual compliance” (federal and state) for the foreseeable future, increasing operational complexity, especially for companies operating across multiple jurisdictions 17.

Global regulatory trends also matter to US-based companies with international operations. The EU AI Act imposes extraterritorial obligations on AI-enabled businesses regarding transparency, fairness, and data protection, and other jurisdictions, including Canada and Japan, are developing their own frameworks 22. Documenting AI development processes, model training data, explainability, and decision lineage is growing in importance as both regulators and clients demand defensible, ethical, and transparent AI operations.

U.S. Regulatory Environment for AI-Driven Product Data Security (2025)

| Regulatory Level | Key Mandates and Trends | Compliance Challenges |
| --- | --- | --- |
| Federal | Executive orders; draft FTC guidance | Uncertain preemption, lagging law |
| State | CCPA, AI Transparency Act, NY RAISE Act | Patchwork rules, dual compliance |
| International | EU AI Act, GDPR, extraterritorial scope | Cross-border enforcement, data flow |

Technological Advancements

AI technologies are driving significant advancements in data protection, threat detection, and access controls. Machine learning algorithms enable real-time anomaly detection, predictive analytics, and automated incident response, reducing the time to identify and mitigate threats 23 24 25. Zero Trust Architectures (ZTA) and AI-powered access control systems enforce continuous verification of users and devices, mitigating risks from compromised credentials and insider threats 26 1. Innovations such as homomorphic encryption and edge computing further enhance data confidentiality and real-time responsiveness 4. However, the sophistication of AI models also necessitates scalable infrastructure, including advanced cloud and quantum computing 2.
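To make "continuous verification" concrete, the sketch below evaluates every access request on its own merits, checking identity, device posture, a risk score, and a least-privilege role map before allowing it. It is a minimal illustration under stated assumptions, not any vendor's policy engine: the roles, resource names, `decide` function, and the 0.7 risk threshold are all hypothetical, and the risk score is assumed to come from a separate behavioral model.

```python
from dataclasses import dataclass

# Hypothetical least-privilege role map; in practice this comes from an identity provider.
ROLE_PERMISSIONS = {
    "analyst": {("product_catalog", "read")},
    "engineer": {
        ("product_catalog", "read"),
        ("product_specs", "read"),
        ("product_specs", "write"),
    },
}

MAX_RISK_SCORE = 0.7  # Assumed threshold; tune to the organization's risk appetite.


@dataclass(frozen=True)
class AccessRequest:
    user_role: str
    mfa_verified: bool
    device_compliant: bool
    risk_score: float  # 0.0 (benign) to 1.0 (high risk), assumed to come from a behavioral model
    resource: str
    action: str


def decide(req: AccessRequest) -> tuple[bool, str]:
    """Evaluate one request; nothing is trusted because of network location or a prior session."""
    if not req.mfa_verified:
        return False, "identity not verified"
    if not req.device_compliant:
        return False, "device posture check failed"
    if req.risk_score > MAX_RISK_SCORE:
        return False, "risk score above threshold"
    # Default deny: unknown roles get an empty permission set.
    if (req.resource, req.action) not in ROLE_PERMISSIONS.get(req.user_role, set()):
        return False, "action not permitted for role"
    return True, "allowed"


# Example: an analyst may read the catalog but not modify product specs.
print(decide(AccessRequest("analyst", True, True, 0.2, "product_catalog", "read")))
print(decide(AccessRequest("analyst", True, True, 0.2, "product_specs", "write")))
```

The checks run in a fixed order and any single failure denies access, which mirrors the "never trust, always verify" principle rather than granting access once at login.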

AI-based threat detection now relies on deep learning, behavioral pattern recognition, and dynamic baselining, which enable organizations to spot emerging and previously unknown attack vectors, including zero-day exploits, faster than signature-based tools alone. Real-world deployments report faster breach detection and response, fewer false positives, and the ability to automate remediation actions across distributed environments 27. These capabilities improve security outcomes and relieve the burden on human security analysts, who can then focus on higher-level risk management.
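Dynamic baselining can be illustrated with a rolling z-score detector: it learns what "normal" looks like for a single metric from a sliding window of recent observations and flags values that fall far outside that range. This is a deliberately simple stand-in for the deep learning and behavioral models described above; the `RollingBaseline` class, the window size of 20, and the three-standard-deviation threshold are illustrative assumptions.

```python
import statistics
from collections import deque


class RollingBaseline:
    """Flags values that deviate from a sliding-window baseline of recent normal observations."""

    def __init__(self, window: int = 20, threshold: float = 3.0, min_history: int = 5):
        self.history = deque(maxlen=window)  # oldest values drop out automatically
        self.threshold = threshold  # number of standard deviations treated as anomalous
        self.min_history = min_history  # observations needed before flagging anything

    def observe(self, value: float) -> bool:
        """Return True if `value` is anomalous; otherwise fold it into the baseline."""
        anomalous = False
        if len(self.history) >= self.min_history:
            mean = statistics.fmean(self.history)
            std = statistics.pstdev(self.history)
            anomalous = std > 0 and abs(value - mean) > self.threshold * std
        if not anomalous:
            # Only normal values update the baseline, so an attack is not learned as "normal".
            self.history.append(value)
        return anomalous


# Example: hourly record exports by one service account, followed by a bulk-export spike.
baseline = RollingBaseline()
hourly_exports = [100, 102, 98, 101, 99, 103, 97, 100, 102, 98, 500]
print([v for v in hourly_exports if baseline.observe(v)])  # prints [500]
```

Keeping anomalous values out of the window prevents a sustained attack from being gradually absorbed into the baseline; production systems typically add seasonality handling and per-entity baselines on top of this idea.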

Another emerging trend is the adoption of privacy-preserving techniques such as differential privacy and federated learning, together with explainable AI (XAI), to mitigate the risks of bias and unintentional disclosure of sensitive information in AI-driven processes. These technologies let organizations reap the benefits of AI-powered automation while respecting privacy and compliance obligations 28. Furthermore, hybrid cloud and edge AI deployments allow organizations to keep sensitive product data in local environments when required by law or business need, while still drawing on the elasticity and analytic power of the cloud 29.
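As an example of one privacy-preserving technique named above, the following sketch applies the Laplace mechanism, a standard way to achieve epsilon-differential privacy for a counting query. A count changes by at most 1 when a single record is added or removed, so adding Laplace noise with scale 1/epsilon is sufficient. The function names and customer data are hypothetical, and the standard-library `random` module is used for readability only; it is not a cryptographically secure randomness source, so production systems should rely on a vetted differential privacy library.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    # The difference of two independent exponential draws with mean `scale` is Laplace(0, scale).
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def private_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Release a count under epsilon-differential privacy via the Laplace mechanism.

    A counting query has sensitivity 1 (one record changes the count by at most 1),
    so Laplace noise with scale 1 / epsilon is sufficient.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1 / epsilon, rng)


# Example with hypothetical data: 300 of 900 customers viewed a given product.
rng = random.Random(7)
customers = [{"id": i, "viewed_sku_123": i % 3 == 0} for i in range(900)]
noisy = private_count(customers, lambda c: c["viewed_sku_123"], epsilon=0.5, rng=rng)
print(round(noisy, 1))  # about 300, perturbed by noise with scale 2
```

Smaller epsilon values add more noise and give stronger privacy guarantees; each released query also consumes part of an overall privacy budget.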

Quantum-safe cryptography and advanced data provenance solutions are also gaining prominence in response to two pressures: the future threat that quantum computing poses to classical public-key encryption, and the regulatory push for end-to-end traceability in AI decision-making 30 31. Together, these developments are strengthening defensive capabilities and making product data management more resilient.

Future Forecast

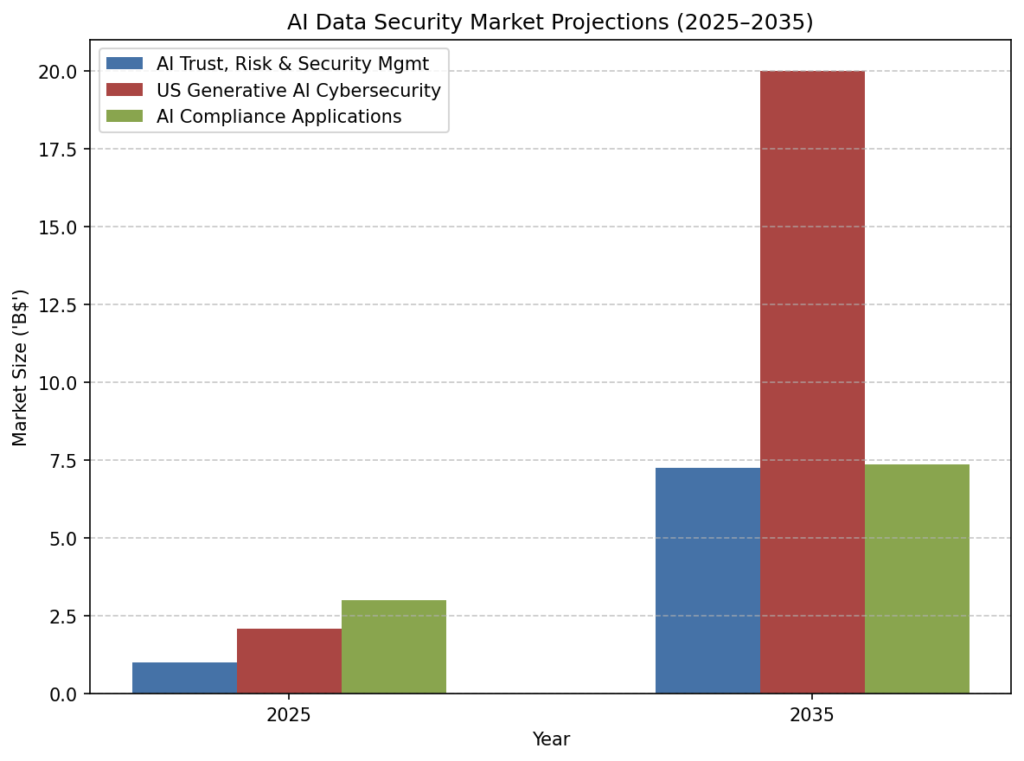

The US AI trust, risk, and security management market is projected to reach $7.24 billion by 2035, growing at a CAGR of 21.77% over the 2026–2035 forecast period 42. The adoption of AI in cybersecurity is expected to accelerate, driven by the need to counter increasingly sophisticated threats and comply with evolving regulations. The industry will see continued investment in AI infrastructure, hybrid cloud-local deployments, and the development of explainable and transparent AI systems. Regulatory harmonization and the maturation of AI governance frameworks will be critical to sustaining innovation and trust 13 20.
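The headline projection can be checked with the standard compound annual growth rate formula, CAGR = (end / start)^(1 / years) - 1. Using the 2025 base of $1.01 billion from the projections table in this section and assuming ten annual compounding periods to 2035, the $7.24 billion figure is consistent with the stated 21.77% rate. The helper names below are illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `rate` annually for `years` years."""
    return start_value * (1 + rate) ** years


# AI trust, risk, and security management figures in $ billions (2025 base, 2035 projection).
print(f"{cagr(1.01, 7.24, 10):.2%}")  # prints 21.77%
print(f"{project(1.01, 0.2177, 10):.2f}")  # prints 7.24
```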

Looking ahead, we expect to see deeper integration between AI-powered security tools and business operations, with dynamic data classification, automated incident management, and continuous risk assessments embedded throughout the product data lifecycle. As quantum computing matures, quantum-resistant cryptography and new AI-driven identity management solutions will take center stage in protecting sensitive product data 30 31. Simultaneously, AI governance will become standard practice, with mandated documentation, bias audits, and traceability dovetailing with client and regulatory expectations.

Given the increasing attack surface and velocity of AI-driven threats, organizations will need to prioritize AI-native security measures, invest in scalable workforce training, and engage proactively with regulators to shape responsible AI security standards. Companies that harness the full spectrum of technical, operational, and compliance best practices will be best positioned for sustainable competitive advantage in the AI-driven market.

AI Data Security Market Projections (2025–2035)

| Segment | 2025 Market ($B) | 2035 Projection ($B) | CAGR | Growth Drivers |
| --- | --- | --- | --- | --- |
| AI Trust, Risk & Security Mgmt | 1.01 | 7.24 | 21.77% | Regulation, threat sophistication |
| US Generative AI Cybersecurity | 2.09 | ~20+ | 23–41% | AI threats, compliance, infrastructure |
| AI Compliance Applications | 3.0+ | 7.37 | 10–13% | Automated audits, new mandates |

Strategic Insights

AI is both a powerful enabler and a source of new risks in product data security. Organizations that successfully leverage AI do so by embedding security throughout the AI lifecycle, from data ingestion and model training to deployment and monitoring 43 44. The most effective strategies combine technical controls—such as encryption, access management, and continuous monitoring—with robust governance, transparency, and workforce training 16 45. Explainability and auditability are emerging as competitive advantages, enabling organizations to meet regulatory requirements and build stakeholder trust 20.

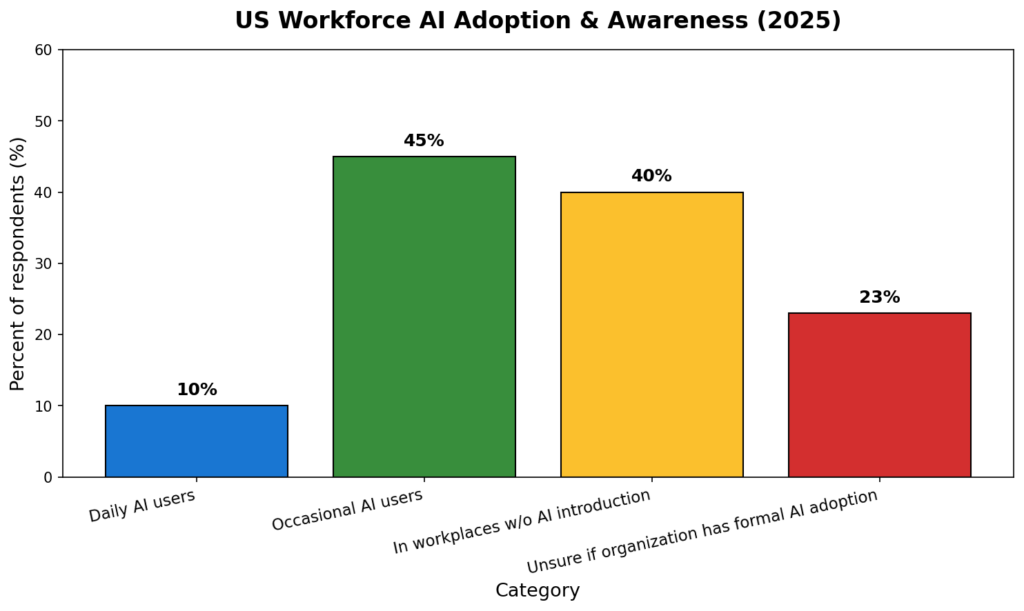

A multi-layered approach is crucial—combining Zero Trust principles, automated anomaly detection, AI-specific threat modeling, and regular security audits with clear governance frameworks and feedback mechanisms for model “drift” or ethical issues. Employee training in cognitive supervision and ethical AI practices must complement technical defenses, as human oversight is vital for detecting unpredictable or biased AI outputs and preventing data leakage from unsanctioned tool usage 46 38.

The need for transparency and explainability is particularly critical as regulations and client demands increasingly require traceable, interpretable AI systems. Implementing model lineage, applying post-hoc interpretability tools, and documenting model bias audits position organizations to comply with existing and future mandates while fostering organizational trust and resilience against AI-specific vulnerabilities 20.

Recommendations

Adopt Zero Trust and Fine-Grained Access Controls: Implement continuous verification of users and devices, enforce least-privilege access, and use adaptive privilege management to minimize unauthorized exposure of sensitive product data 1 26 35.
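Least privilege and adaptive revocation can be sketched as a store of narrowly scoped, time-limited grants with a default-deny check. This is a hypothetical in-memory model for illustration only; the `PrivilegeStore` class, its methods, and the resource names are assumptions, and a real deployment would keep grants in an identity or privileged-access management system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Grant:
    user: str
    resource: str
    action: str
    expires_at: datetime


@dataclass
class PrivilegeStore:
    """Hypothetical in-memory store of just-in-time, time-limited access grants."""

    grants: list[Grant] = field(default_factory=list)

    def grant(self, user: str, resource: str, action: str, ttl: timedelta, now: datetime) -> None:
        # Scope each grant to one user, one resource, and one action, with an expiry.
        self.grants.append(Grant(user, resource, action, now + ttl))

    def revoke_user(self, user: str) -> int:
        """Adaptive revocation: remove all of a user's grants, e.g. when a risk signal fires."""
        before = len(self.grants)
        self.grants = [g for g in self.grants if g.user != user]
        return before - len(self.grants)

    def is_allowed(self, user: str, resource: str, action: str, now: datetime) -> bool:
        # Default deny: only an exact, unexpired grant permits access.
        return any(
            g.user == user and g.resource == resource and g.action == action and now < g.expires_at
            for g in self.grants
        )


# Example: one hour of read access to a pricing table, checked 30 minutes later.
t0 = datetime(2025, 1, 1, 9, 0, tzinfo=timezone.utc)
store = PrivilegeStore()
store.grant("alice", "pricing_db", "read", timedelta(hours=1), t0)
print(store.is_allowed("alice", "pricing_db", "read", t0 + timedelta(minutes=30)))  # prints True
print(store.is_allowed("alice", "pricing_db", "write", t0 + timedelta(minutes=30)))  # prints False
```

Passing `now` explicitly keeps the logic deterministic and easy to test; expiry handles routine cleanup, while `revoke_user` covers the adaptive case.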

Invest in AI-Driven Threat Detection and Response: Deploy machine learning-based tools for real-time anomaly detection, automated incident response, predictive analytics, and behavioral monitoring to reduce detection and remediation times 23 25 24.

Strengthen Data Governance and Provenance: Maintain detailed records of data sources, model lineage, model weights, and access logs at all stages of the AI and product data lifecycle to support compliance and enhance auditability 20 16 44.
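One common way to make lineage and access records auditable is a hash chain, in which each record includes the SHA-256 hash of the previous one, so a later edit to an earlier record is detectable. The sketch below assumes JSON-serializable entries; the `LineageLog` class and the event fields are hypothetical. It provides tamper evidence, not tamper prevention, and it cannot detect removal of the newest records on its own, so the latest hash should also be stored somewhere the log's writers cannot modify.

```python
import hashlib
import json


def _record_hash(entry: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    payload = json.dumps({"entry": entry, "prev": prev_hash}, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class LineageLog:
    """Append-only, hash-chained log of data sources, model versions, and access events."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.records: list[dict] = []

    def append(self, entry: dict) -> str:
        prev = self.records[-1]["hash"] if self.records else self.GENESIS
        digest = _record_hash(entry, prev)
        self.records.append({"entry": entry, "prev": prev, "hash": digest})
        return digest

    def verify(self) -> bool:
        """Recompute every hash; editing or reordering earlier records breaks the chain."""
        prev = self.GENESIS
        for rec in self.records:
            if rec["prev"] != prev or rec["hash"] != _record_hash(rec["entry"], prev):
                return False
            prev = rec["hash"]
        return True


# Example with hypothetical events; model weights are recorded by their SHA-256 digest.
log = LineageLog()
log.append({"event": "dataset_ingested", "source": "supplier_feed.csv"})
log.append({"event": "model_trained", "weights_sha256": hashlib.sha256(b"example-weights").hexdigest()})
log.append({"event": "model_accessed", "user": "alice", "model": "pricing_v1"})
print(log.verify())  # prints True
```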

Ensure Regulatory Compliance Across Jurisdictions: Monitor both federal and state regulatory developments, conduct algorithmic impact and data protection assessments, implement privacy-by-design principles, and prepare for potential cross-border compliance requirements 19 47 15 22.

Enhance Workforce Training and Oversight: Upskill employees in AI security, cognitive supervision, and ethical risk management; provide clear usage policies for sanctioned vs. unsanctioned AI, and emphasize the importance of human oversight in AI workflows 46 45 48.
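A usage policy for sanctioned versus unsanctioned AI is easier to enforce when it is also expressed in machine-readable form, for example in a proxy or gateway that classifies outbound requests to AI services. The sketch below assumes a simple domain allowlist; every hostname in it is a placeholder under the reserved `.example` domain, and the classification labels are illustrative rather than taken from any product.

```python
from urllib.parse import urlparse

# Placeholder domains; the real lists come from the organization's AI usage policy.
SANCTIONED_AI_DOMAINS = {"llm-gateway.corp.example"}
KNOWN_AI_DOMAINS = SANCTIONED_AI_DOMAINS | {"public-chatbot.example", "free-summarizer.example"}


def _matches(host: str, domains: set) -> bool:
    # Match the domain itself or any subdomain of it, but not look-alike suffixes.
    return any(host == d or host.endswith("." + d) for d in domains)


def classify_ai_request(url: str) -> str:
    """Label an outbound request as sanctioned AI, unsanctioned (shadow) AI, or not AI."""
    host = (urlparse(url).hostname or "").lower()
    if _matches(host, SANCTIONED_AI_DOMAINS):
        return "sanctioned"
    if _matches(host, KNOWN_AI_DOMAINS):
        return "unsanctioned"  # candidate shadow AI use: alert, coach, or block per policy
    return "not_ai"


for url in [
    "https://llm-gateway.corp.example/v1/chat",
    "https://api.public-chatbot.example/upload",
    "https://intranet.corp.example/wiki",
]:
    print(url, "->", classify_ai_request(url))
```

Flagged requests are best treated as a prompt for training and policy review; they complement, rather than replace, human oversight of AI workflows.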

Prioritize Explainability and Transparency: Integrate interpretability tools (e.g., SHAP, LIME), instrument model audit trails, and regularly document and review explanation tests to support explainable AI and compliance with regulatory and stakeholder requirements 20.
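SHAP and LIME are third-party libraries, so instead of reproducing their APIs, the sketch below uses permutation importance, a different and simpler model-agnostic technique: it shuffles one feature at a time and measures how much accuracy drops. Unlike SHAP or LIME, it yields global rather than per-prediction explanations. The toy fraud rule, feature layout, and helper names are hypothetical.

```python
import random


def accuracy(model, X, y) -> float:
    return sum(model(x) == target for x, target in zip(X, y)) / len(y)


def permutation_importance(model, X, y, n_repeats: int = 10, seed: int = 0) -> list:
    """Average accuracy drop when each feature column is shuffled (larger drop = more influential)."""
    rng = random.Random(seed)
    baseline = accuracy(model, X, y)
    importances = []
    for j in range(len(X[0])):
        drops = []
        for _ in range(n_repeats):
            column = [row[j] for row in X]
            rng.shuffle(column)  # breaks the link between feature j and the labels
            X_perm = [row[:j] + [value] + row[j + 1:] for row, value in zip(X, column)]
            drops.append(baseline - accuracy(model, X_perm, y))
        importances.append(sum(drops) / n_repeats)
    return importances


def toy_fraud_model(x) -> bool:
    # Hypothetical stand-in model: flags transactions over $1,000 and ignores feature 1.
    return x[0] > 1000


data_rng = random.Random(1)
X = [[data_rng.uniform(0, 2000), data_rng.uniform(0, 1)] for _ in range(200)]
y = [toy_fraud_model(x) for x in X]
print(permutation_importance(toy_fraud_model, X, y))  # feature 1 (ignored by the model) scores 0.0
```

Recording these scores alongside each model version is one way to produce the documented, repeatable explanation tests the recommendation calls for.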

Continuously Monitor and Update Security Posture: Implement automated monitoring for unexpected AI model behavior, perform regular security audits, and adapt risk mitigation strategies to address evolving threats and regulatory changes 49 50.
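Monitoring for unexpected model behavior often starts with distribution drift checks. The sketch below computes the Population Stability Index (PSI) between a reference sample, such as model scores at deployment, and a live sample. For simplicity it uses equal-width bins over the combined range rather than reference quantiles; the function name and synthetic data are assumptions, and the commonly cited rule of thumb (below 0.1 stable, above 0.25 significant shift) is a heuristic rather than a standard.

```python
import math
import random


def psi(expected: list, actual: list, bins: int = 10) -> float:
    """Population Stability Index between a reference sample and a live sample."""
    lo = min(min(expected), min(actual))
    hi = max(max(expected), max(actual))
    width = (hi - lo) / bins or 1.0  # guard against all values being identical

    def proportions(values):
        counts = [0] * bins
        for v in values:
            counts[min(int((v - lo) / width), bins - 1)] += 1
        # A small floor avoids log(0) and division by zero for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic model scores: a deployment-time reference, a stable live window, and a shifted one.
rng = random.Random(42)
reference = [rng.gauss(0.3, 0.1) for _ in range(5000)]
stable = [rng.gauss(0.3, 0.1) for _ in range(5000)]
shifted = [rng.gauss(0.5, 0.1) for _ in range(5000)]
print(f"stable:  {psi(reference, stable):.3f}")
print(f"shifted: {psi(reference, shifted):.3f}")
```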

Prepare for Future Technologies: Anticipate and plan for the adoption of quantum-resistant cryptography, privacy-preserving AI techniques like federated learning, and dynamic edge/cloud deployment strategies to maintain robust protection for sensitive product data 30 4 31.
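Federated learning keeps training data at each site and shares only model parameters. The aggregation step of federated averaging (FedAvg) can be sketched as a weighted mean of client parameter vectors, weighted by each client's number of training examples. The function name and parameter layout are illustrative; note that shared updates can still leak information, so federated learning is often paired with secure aggregation or differential privacy.

```python
def fed_avg(client_weights: list, client_sizes: list) -> list:
    """Federated averaging: weighted mean of locally trained parameter vectors.

    Each client's parameters are weighted by its number of training examples; only
    parameters are shared, and raw product data stays at each client.
    """
    if not client_weights or len(client_weights) != len(client_sizes):
        raise ValueError("need at least one client and one size per client")
    total = sum(client_sizes)
    dim = len(client_weights[0])
    return [
        sum(weights[i] * size for weights, size in zip(client_weights, client_sizes)) / total
        for i in range(dim)
    ]


# Example: two sites with hypothetical two-parameter models trained on 1,000 and 3,000 records.
print(fed_avg([[1.0, 2.0], [3.0, 4.0]], [1000, 3000]))  # prints [2.5, 3.5]
```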

Best Practices Checklist for AI-Enhanced Product Data Security

| Practice Area | Recommended Actions |
| --- | --- |
| Access Control | Zero Trust, fine-grained privileges, adaptive revocation |
| Threat Detection | AI-powered anomaly and scenario monitoring, SOAR integration |
| Data Governance | Model and data lineage, encryption, regular audits |
| Compliance | Dual (federal and state) monitoring, DPIAs, documentation |
| Training | Cognitive supervision, upskilling, shadow AI protocols |
| Explainability | Audit trails, interpretability tools, model reviews |
| Emerging Tech | Quantum-ready cryptography, federated learning, privacy-preserving AI |

Appendices

[1] 51121F Security Software Publishing in the US Industry Report

[2] 51121C Business Analytics Enterprise Software Publishing in the US Industry Report

[3] 51 Information in the US Industry Report

[4] 51821 Data Processing & Hosting Services in the US Industry Report

[5] 9 Brands That Doubled Down On AI in 2025

[6] U.S. Generative AI Cybersecurity Market Growth Drivers, Trends 2034

[7] Why Midsize Companies Are Best Positioned to Thrive in the Age of AI

[8] Amazon’s Rufus Is Just the Beginning of AI Shopping

[9] Cybersecurity Firm CrowdStrike’s Earnings, Revenue Edge By Estimates

[10] Why Zscaler Stock Fell Despite The Cybersecurity Firm Posting Earnings Beat

[11] Top AI Security Vendors & Companies in 2025 – Akto

[12] United States Generative AI Cybersecurity Market Strengthens – openPR.com

[13] Tech Trends 2026 | Deloitte Insights

[14] 2025 Global Privacy, AI, and Data Security Regulations: What Enterprises Need to Know

[15] California lawmakers say they’ll keep pushing to regulate AI

[16] Managing Data Security and Privacy Risks in Enterprise AI | Frost Brown Todd

[17] December 2025 AI Executive Order: Impact on Data Security and Compliance – Kiteworks

[18] Trump’s Idea to Use an Executive Order on State AI Regulations Has 1 Key Problem

[19] US AI Regulation Landscape 2025: Key Compliance Requirements for Businesses

[20] The Ripple Effect of Clarity in Artificial Intelligence

[21] Recent Developments in Artificial Intelligence and Privacy Legislation in New York State

[22] AI Regulations in 2025: US, EU, UK, Japan, China & More – Anecdotes AI

[23] 9 AI Use Cases in Cybersecurity – SentinelOne

[24] AI Threat Detection: A Guide to Automated Cybersecurity – Teradata

[25] AI-Driven Cybersecurity: The Future of Intelligent Threat Defense | Seceon Inc.

[26] 8 Ways AI is Transforming Access Control in 2025 – Veza

[27] AI in Cybersecurity: Key Case Studies and Breakthroughs | by Eastgate Software | Medium

[28] AI and Privacy: Safeguarding Data in the Age of Artificial Intelligence | DigitalOcean

[29] TCU bets big on AI with $10M Dell deal to reshape teaching and research

[30] 51121 Software Publishing in the US Industry Report

[31] Gartner Top 10 Strategic Technology Trends for 2026

[32] Researchers Just Found a Big Security Flaw in Microsoft’s AI. Here’s Why Businesses Should Worry

[33] Top 13 AI Cybersecurity Use Cases with Real Examples – Research AIMultiple

[34] Real-World Examples of AI in Cyber Threat Detection | BitLyft

[35] AI Data Protection: Unpacking Fine-Grained Access Control – Squirro

[36] Top 14 AI Security Risks in 2025 – SentinelOne

[37] CEOs’ Biggest AI Fear Is Surprisingly Old School

[38] Cybersecurity trends: IBM’s predictions for 2025

[39] Google, Oracle, Amazon, Meta In Hot AI Race. But A Data Center Backlash Is Surging.

[40] The Future of AI Data Security: Trends to Watch in 2025 – CyberProof

[41] AI Privacy Risks, Challenges, and Solutions – Trigyn Technologies

[42] AI Trust, Risk and Security Management Market Size to Hit USD 21.06 Billion by 2035

[43] Data & AI Lifecycle 101: Unpacking the Unique Stages, Tools, and Technologies

[44] Securing AI Data Across The Lifecycle – SECURITY.COM

[47] Data Privacy & AI Ethics Best Practices | Governance Guidance 2025 – TrustCommunity

[48] Anthropic Turns Inward to Show How AI Affects Its Own Workforce

[49] AI SecOps: Ensuring Security Throughout the AI/ML Development Lifecycle

[50] Top 8 AI Security Best Practices – Sysdig